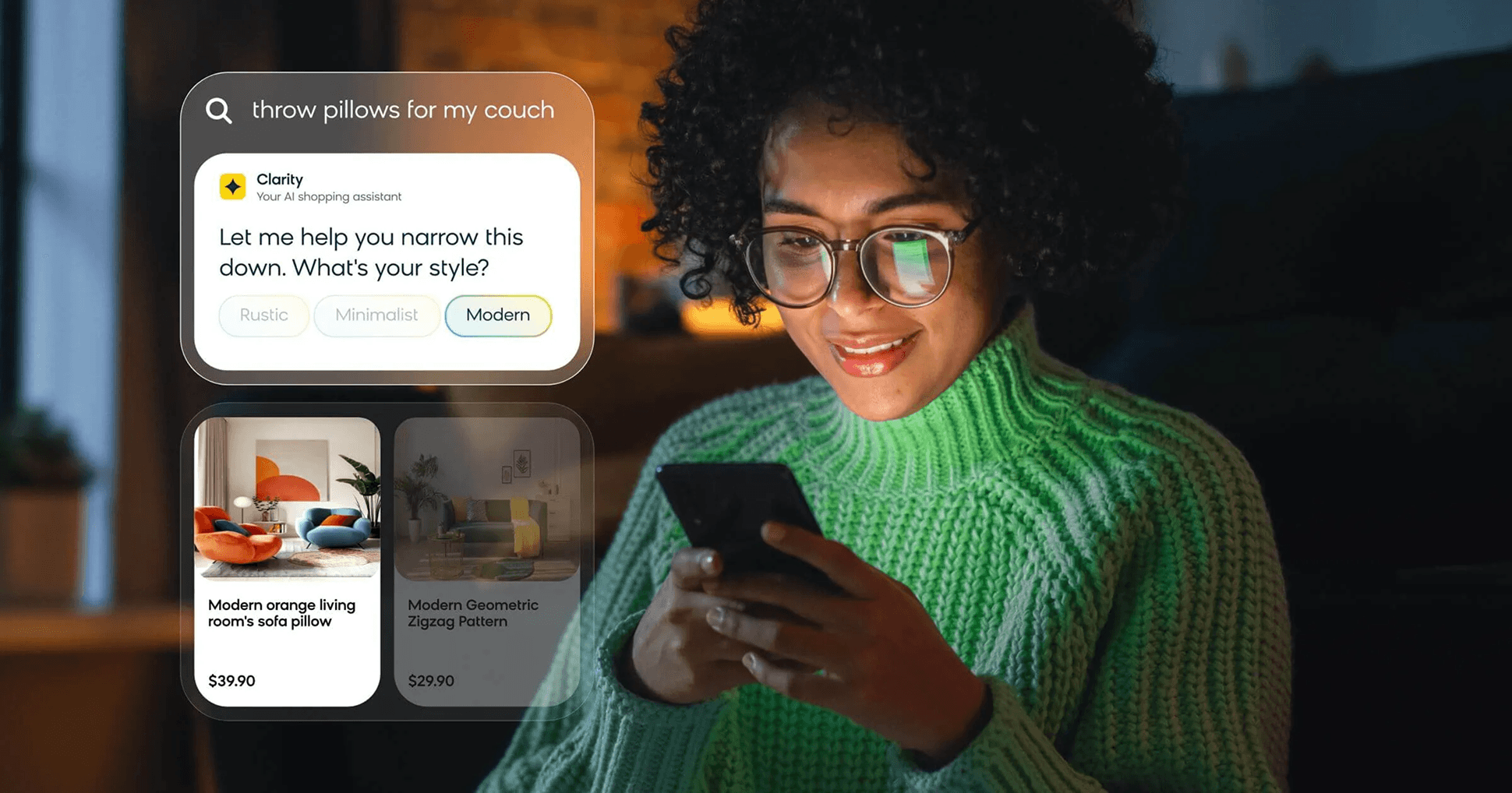

Conversational shopping agent

Designed Bloomreach Clarity, a conversational shopping agent that drove 9% avg conversion lift and $1M ARR in year one

problem

Traditional ecommerce search works for known-item queries but breaks down when shoppers don't know exactly what they want. They're left navigating filters and scrolling results alone, leading to high exit rates and missed revenue. The design challenge was two-sided: shoppers needed intuitive guidance in the moment, and merchants needed to see measurable commercial value during time-limited trials before committing to a purchase.

solution

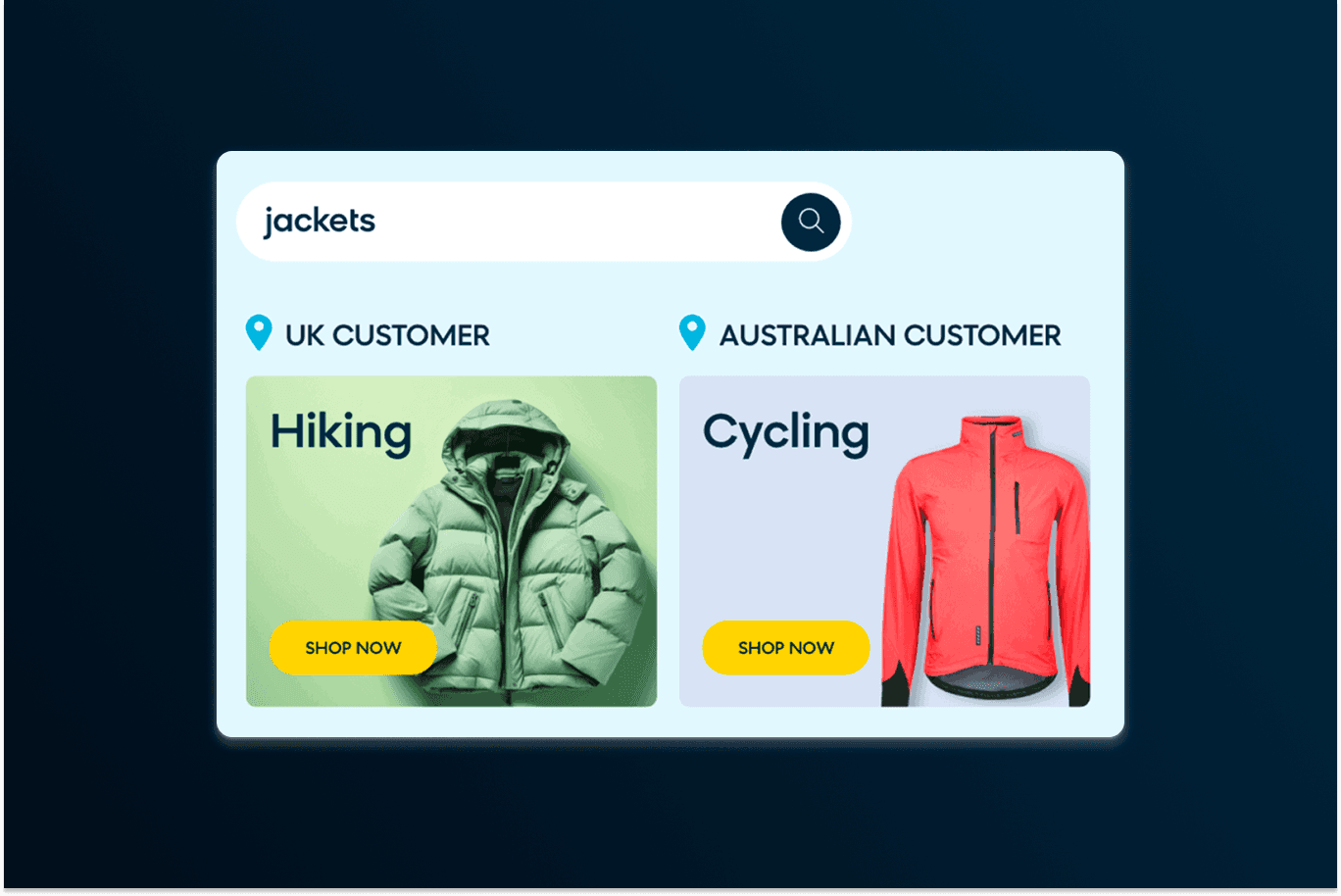

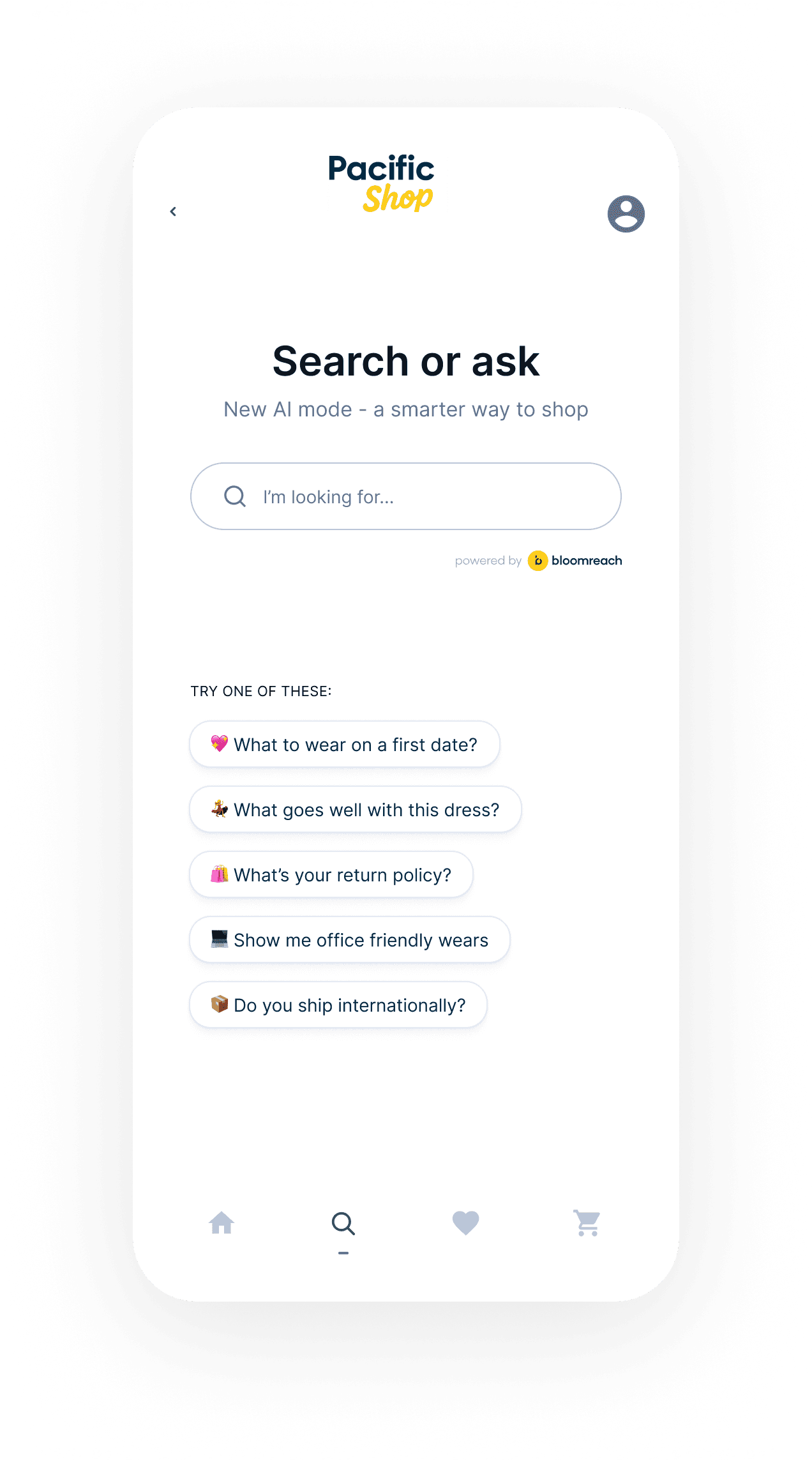

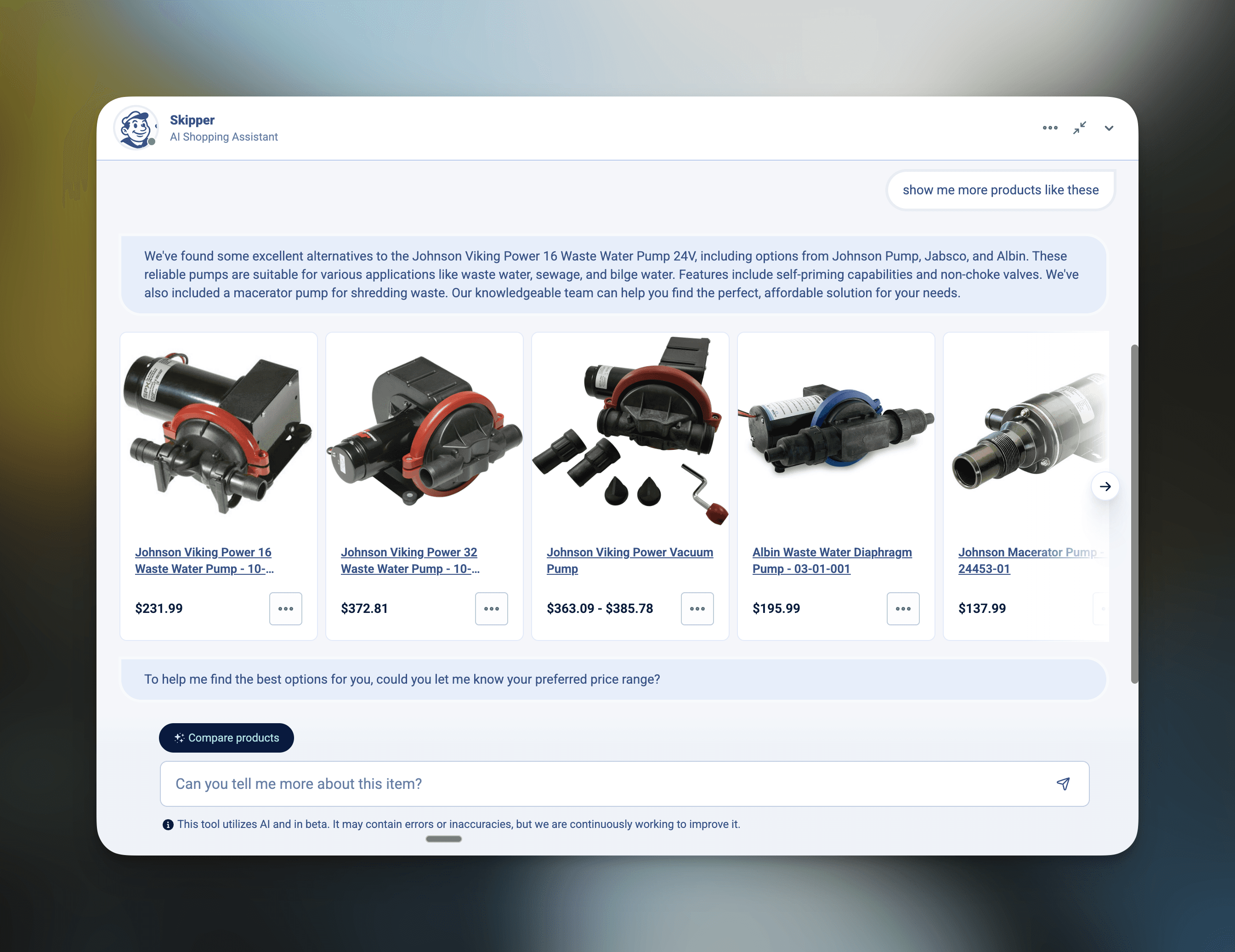

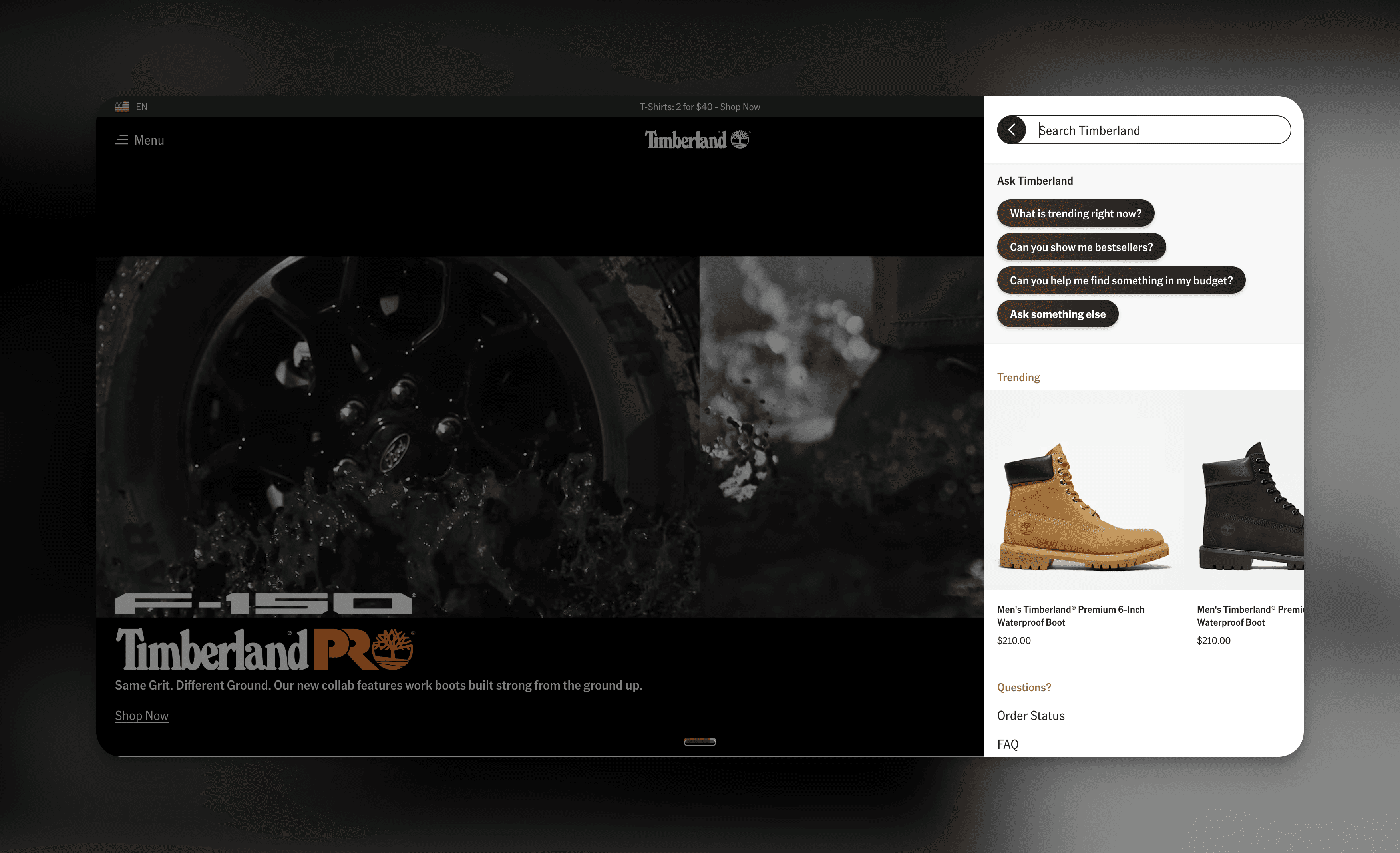

Designed a conversational shopping agent across search, PLP, PDP, and checkout that interprets natural-language queries, asks clarifying questions, and surfaces relevant products. For shoppers: conversations that feel like getting help from someone who knows the store. For merchants: back-office tooling to configure agent goals, tone, and catalog scope, plus an optimization workflow tying conversations to performance metrics so they could see lift during trials and make an informed buying decision.

my role

Staff product designer

industry

Ecommerce

timeframe

Jan 2025 - now

outcome snapshot

- From 0 to GA with $1M ARR in year 1, growing trials and paying customers
- Drove avg. +9% conversion rate and +20% AOV across early customers
- Designed end-to-end shopper experience, merchant back office, and optimization workflows

project snapshot

Staff product designer on a new AI product at Bloomreach, a leading ecommerce personalization platform. Clarity is a conversational shopping agent that sits across key journey points—landing, search, PLP, PDP, checkout—and connects shoppers to products through natural-language dialogue. I owned design end-to-end: from early customer research and concept validation through shipped product, back-office tooling, and the trial-to-close experience that helped the product hit $1M ARR in its first year.

The challenge of shaping new experiences

New product category: No established UX patterns for conversational shopping agents—no playbook for where they should appear, how they should behave, or how much autonomy the AI should have

Two-sided design problem: Shopper experience had to feel natural and helpful; merchant experience had to demonstrate measurable value quickly during trials, or the deal wouldn't close

Discovery vs. conversion tension: The AI needed to help shoppers explore without becoming a pushy sales tool—finding the right balance between guiding and selling

Speed to market: Competitive pressure meant designing, testing, and shipping in tight cycles while building conviction internally and with early customers

Cross-functional coordination: Worked across product, engineering, data science, customer success, and sales—aligning on what to build, what to promise customers, and what to measure

How to anchor innovation with data and experiments

Customer research: Conducted interviews with ecommerce merchandisers, digital product owners, and CX managers to understand where shoppers struggle most and where merchants see the biggest revenue leakage. Mapped existing shopper journeys across search, PLP, PDP, and checkout to identify high-impact intervention points.

Shopper research: Tested early prototypes with shoppers across categories (fashion, beauty, home) to understand when conversational guidance feels helpful versus intrusive. Surfaced patterns around question types, trust signals, and the threshold where "helpful" tips into "pushy."

Competitive & market analysis: Assessed existing chatbot and guided selling solutions to identify what was already failing. Most were keyword-matching FAQ bots, not conversational product advisors. This helped frame Clarity's differentiation.

Trial-based learning: Used early customer trials as a live research environment—analyzed conversation transcripts, tracked engagement and conversion metrics, and ran rapid iteration cycles to improve the experience between trials. This feedback loop was central to finding product-market fit.

Insights that helped shape the product

Shoppers engage most when they're genuinely uncertain, not when they've already decided. The highest-value use cases were exploratory queries and high-consideration purchases, not known-item search.

Merchants cared less about "cool AI" and more about provable commercial outcomes. Trial design and measurement clarity mattered as much as the product itself.

The agent's credibility came from product knowledge depth—knowing specs, compatibility, use cases—not from personality or conversational flair. Shoppers wanted competence, not charm.

Spotlight story

The hardest design tension in Clarity was the gap between what shoppers want and what merchants need to see during a trial. Shoppers want a patient, knowledgeable guide who helps them think, not one that pushes them toward a cart. But merchants running a 30-day trial need to see RPV and conversion lift to justify the investment. If the agent is too passive, the metrics don't move. If it's too aggressive, shoppers disengage.

I spent a lot of time in conversation transcripts working through this. In one early trial, the agent was surfacing product recommendations too quickly—before shoppers had articulated what they actually needed. Engagement was high (people were curious about the AI), but conversion lift was flat. The shoppers who did convert were the ones who'd had multi-turn conversations where the agent asked clarifying questions first—understanding use case, preferences, and constraints before showing products. That pattern became a design principle: earn the recommendation. The agent needed to demonstrate understanding before it could credibly suggest.

We redesigned the conversation flow to lead with clarifying questions and delay product cards until the agent had enough context. That shift moved both shopper satisfaction and conversion metrics. It also reframed how we talked to merchants about the product—not "an AI that sells" but "an AI that helps shoppers buy."

design samples

01

02

03

takeaway thoughts

Designing a 0-to-1 AI product taught me that the hardest problems aren't the AI ones; they're the design problems that sit underneath. How do you make a conversation feel helpful without being intrusive? How do you prove value to a buyer within a trial window without compromising the user experience? How do you design a back office that makes a complex AI system feel manageable to a merchandiser?

The $1M ARR milestone mattered, but what I'm most proud of is the feedback loop we built: transcripts and trial data fed directly into design decisions, which shaped the next trial, which improved the next iteration. That cycle of research, ship, learn, repeat is what took the product from "interesting concept" to real commercial traction.

Conversational AI in ecommerce is still early. The patterns we established — earn the recommendation, measure what matters to the buyer, give merchants control without overwhelming them — will keep evolving. I'm excited about where this goes next!